How To Draw A Random Sample From A Specific Distribution


Random Sampling and the Distribution of Sample Averages

To clarify the basic idea of random sampling, let us jump back to the dice rolling example:

Suppose we are rolling the dice \(n\) times. This means we are interested in the outcomes of the random variables \(Y_i, \ i=1,...,n\), which are characterized by the same distribution. Since these outcomes are selected randomly, they are random variables themselves and their realizations will differ each time we draw a sample, i.e., each time we roll the dice \(n\) times. Furthermore, each observation is randomly drawn from the same population, that is, the numbers from \(1\) to \(6\), and their individual distribution is the same. Hence \(Y_1,\dots,Y_n\) are identically distributed.

Moreover, we know that the value of any of the \(Y_i\) does not provide any information on the remainder of the outcomes. In our example, rolling a six as the first observation in our sample does not alter the distributions of \(Y_2,\dots,Y_n\): all numbers are equally likely to occur. This means that all \(Y_i\) are also independently distributed. Thus \(Y_1,\dots,Y_n\) are independently and identically distributed (i.i.d.). The dice example uses this simplest of sampling schemes, which is why it is called simple random sampling. This concept is summarized in Key Concept 2.5.

Key Concept 2.5

Simple Random Sampling and i.i.d. Random Variables

In simple random sampling, \(n\) objects are drawn at random from a population. Each object is equally likely to end up in the sample. We denote the value of the random variable \(Y\) for the \(i^{th}\) randomly drawn object as \(Y_i\). Since all objects are equally likely to be drawn and the distribution of \(Y_i\) is the same for all \(i\), the \(Y_1, \dots, Y_n\) are independently and identically distributed (i.i.d.). This means the distribution of \(Y_i\) is the same for all \(i=1,\dots,n\) and \(Y_1\) is distributed independently of \(Y_2, \dots, Y_n\) and \(Y_2\) is distributed independently of \(Y_1, Y_3, \dots, Y_n\) and so forth.
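A minimal sketch of simple random sampling in R, assuming a fair six-sided die. The seed, the sample size and the object names (`Y`, `rel.freq`, `after.six`) are illustrative choices and not part of the text. The first check looks at the "identically distributed" part, the second one gives an informal impression of independence by looking only at rolls that directly follow a six.

```r
# illustrative seed so that the sketch is reproducible
set.seed(42)

# draw n i.i.d. observations from the population {1, ..., 6}
n <- 60000
Y <- sample(1:6, n, replace = TRUE)

# identical distribution: every face should have a relative frequency close to 1/6
rel.freq <- table(Y) / n
round(rel.freq, 3)

# independence (informal check): among rolls that directly follow a six,
# the share of sixes should again be close to 1/6
after.six <- Y[-1][Y[-n] == 6]
share.six.after.six <- mean(after.six == 6)
share.six.after.six
```

Both quantities only approximate \(1/6\) because they are computed from a finite sample; this is an illustration, not a formal test of independence.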

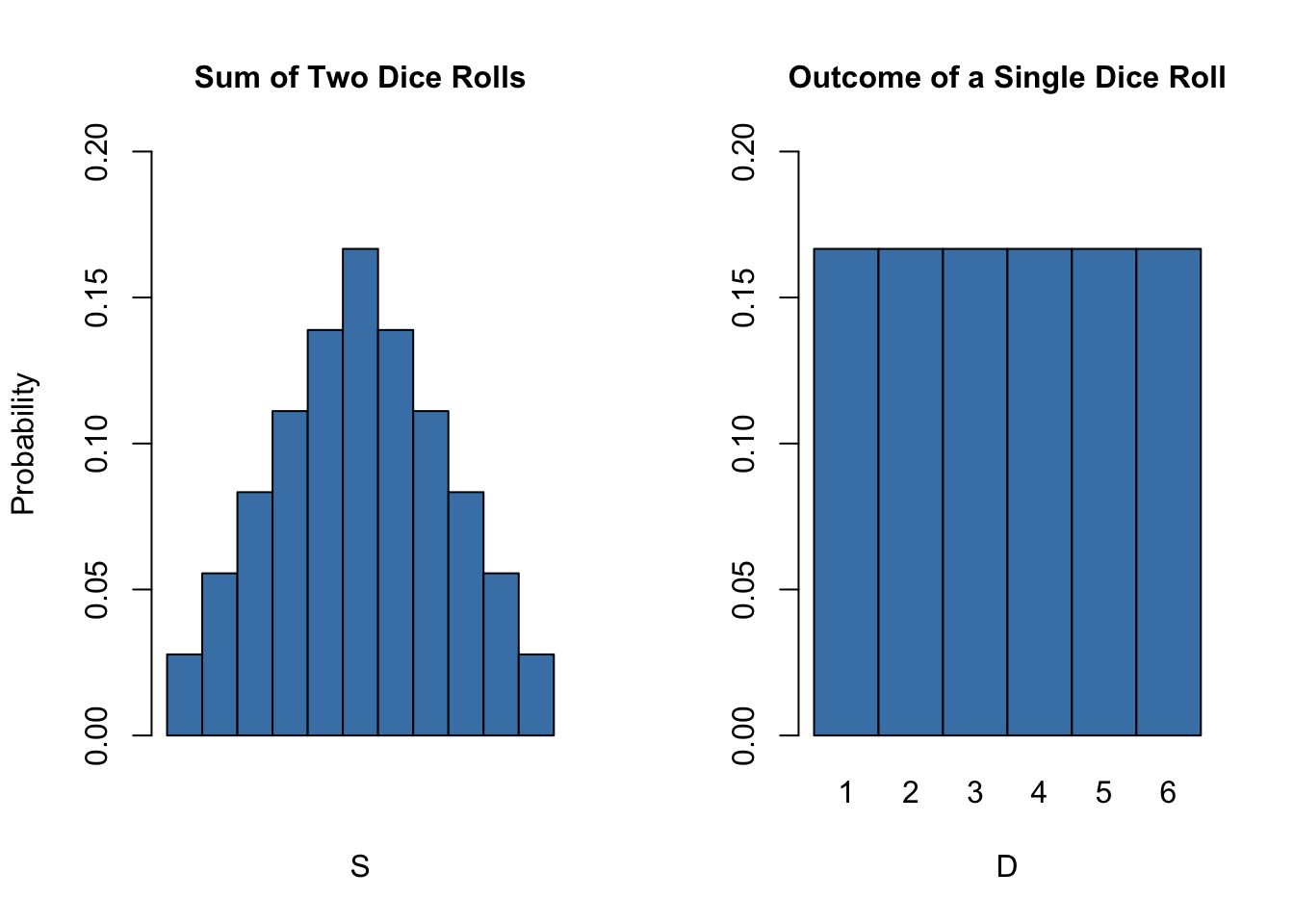

What happens if we consider functions of the sample data? Consider the example of rolling a dice two times in a row once again. A sample now consists of two independent random draws from the set \(\{1,2,3,4,5,6\}\). It is apparent that any function of these two random variables, e.g. their sum, is also random. Convince yourself by executing the code below several times.

    sum(sample(1:6, 2, replace = TRUE))
    #> [1] 7

Clearly, this sum, let us call it \(S\), is a random variable as it depends on randomly drawn summands. For this example, we can completely enumerate all outcomes and hence write down the theoretical probability distribution of our function of the sample data \(S\):

We face \(6^2=36\) possible pairs. Those pairs are

\[\begin{align*} &(1,1) (1,2) (1,3) (1,4) (1,5) (1,6) \\ &(2,1) (2,2) (2,3) (2,4) (2,5) (2,6) \\ &(3,1) (3,2) (3,3) (3,4) (3,5) (3,6) \\ &(4,1) (4,2) (4,3) (4,4) (4,5) (4,6) \\ &(5,1) (5,2) (5,3) (5,4) (5,5) (5,6) \\ &(6,1) (6,2) (6,3) (6,4) (6,5) (6,6) \end{align*}\]

Thus, possible outcomes for \(S\) are

\[ \left\{ 2,3,4,5,6,7,8,9,10,11,12 \right\} . \]

Enumeration of outcomes yields

\[\begin{align} P(S) = \begin{cases} 1/36, \ & S = 2 \\ 2/36, \ & S = 3 \\ 3/36, \ & S = 4 \\ 4/36, \ & S = 5 \\ 5/36, \ & S = 6 \\ 6/36, \ & S = 7 \\ 5/36, \ & S = 8 \\ 4/36, \ & S = 9 \\ 3/36, \ & S = 10 \\ 2/36, \ & S = 11 \\ 1/36, \ & S = 12 \end{cases} \end{align}\]
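The enumeration can also be left to R. The following sketch builds all \(36\) sums with outer() and counts them with table(); the object names `sums`, `counts` and `probs` are illustrative and not taken from the text.

```r
# all 36 sums of two dice: entry [i, j] of the matrix is i + j
sums <- outer(1:6, 1:6, FUN = "+")

# count how often each value of S occurs among the 36 equally likely pairs
counts <- table(sums)

# dividing by 36 turns the counts into the probabilities P(S)
probs <- counts / 36

# the counts are the numerators of the probabilities listed above
counts
```

Since every pair has probability \(1/36\), the count for each value of \(S\) is exactly the numerator of the corresponding probability.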

We can also compute \(E(S)\) and \(\text{Var}(S)\) as stated in Key Concept 2.1 and Key Concept 2.2.

    # Vector of outcomes
    S <- 2:12

    # Vector of probabilities
    PS <- c(1:6, 5:1) / 36

    # Expectation of S
    ES <- sum(S * PS)
    ES
    #> [1] 7

    # Variance of S
    VarS <- sum((S - c(ES))^2 * PS)
    VarS
    #> [1] 5.833333

So the distribution of \(S\) is known. It is also evident that its distribution differs considerably from the marginal distribution, i.e., the distribution of a single dice roll's outcome, \(D\). Let us visualize this using bar plots.

    # divide the plotting area into one row with two columns
    par(mfrow = c(1, 2))

    # plot the distribution of S
    barplot(PS,
            ylim = c(0, 0.2),
            xlab = "S",
            ylab = "Probability",
            col = "steelblue",
            space = 0,
            main = "Sum of Two Dice Rolls")

    # plot the distribution of D
    probability <- rep(1 / 6, 6)
    names(probability) <- 1:6

    barplot(probability,
            ylim = c(0, 0.2),
            xlab = "D",
            col = "steelblue",
            space = 0,
            main = "Outcome of a Single Dice Roll")
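As a cross-check of the enumerated distribution of \(S\), one can also simulate many pairs of rolls and compare the simulated moments and relative frequencies with the theoretical values \(E(S) = 7\) and \(\text{Var}(S) = 35/6 \approx 5.83\). This is only a sketch: the seed, the number of repetitions and the names `rolls` and `S.sim` are assumptions made for illustration.

```r
# illustrative seed and number of simulated samples
set.seed(1)
reps <- 100000

# 2 x reps matrix: each column holds one sample of two independent rolls
rolls <- matrix(sample(1:6, 2 * reps, replace = TRUE), nrow = 2)

# the column sums are simulated realizations of S
S.sim <- colSums(rolls)

# simulated mean and variance, to be compared with 7 and 35/6
mean(S.sim)
var(S.sim)

# simulated relative frequencies next to the theoretical probabilities
rbind(simulated = as.vector(table(S.sim)) / reps,
      theoretical = c(1:6, 5:1) / 36)
```

The simulated values fluctuate around the theoretical ones and change with the seed.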

Many econometric procedures deal with averages of sampled data. It is typically assumed that observations are drawn randomly from a larger, unknown population. As demonstrated for the sample function \(S\), computing an average of a random sample has the effect that the average is a random variable itself. This random variable in turn has a probability distribution, called the sampling distribution. Knowledge about the sampling distribution of the average is therefore crucial for understanding the performance of econometric procedures.

The sample average of a sample of \(n\) observations \(Y_1, \dots, Y_n\) is

\[ \overline{Y} = \frac{1}{n} \sum_{i=1}^n Y_i = \frac{1}{n} (Y_1 + Y_2 + \cdots + Y_n). \]

\(\overline{Y}\) is also called the sample mean.

Mean and Variance of the Sample Mean

Suppose that \(Y_1,\dots,Y_n\) are i.i.d. and denote \(\mu_Y\) and \(\sigma_Y^2\) as the mean and the variance of the \(Y_i\). Then we have that

\[ E(\overline{Y}) = E\left(\frac{1}{n} \sum_{i=1}^n Y_i \right) = \frac{1}{n} E\left(\sum_{i=1}^n Y_i\right) = \frac{1}{n} \sum_{i=1}^n E\left(Y_i\right) = \frac{1}{n} \cdot n \cdot \mu_Y = \mu_Y \]

and

\[\begin{align*} \text{Var}(\overline{Y}) =& \text{Var}\left(\frac{1}{n} \sum_{i=1}^n Y_i \right) \\ =& \frac{1}{n^2} \sum_{i=1}^n \text{Var}(Y_i) + \frac{1}{n^2} \sum_{i=1}^n \sum_{j=1, j\neq i}^n \text{cov}(Y_i,Y_j) \\ =& \frac{\sigma^2_Y}{n} \\ =& \sigma_{\overline{Y}}^2. \end{align*}\]

The second summand vanishes since \(\text{cov}(Y_i,Y_j)=0\) for \(i\neq j\) due to independence. Consequently, the standard deviation of the sample mean is given by \[\sigma_{\overline{Y}} = \frac{\sigma_Y}{\sqrt{n}}.\]
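As a worked check, take the dice example with \(n = 2\), assuming a fair die. A single roll has \(E(Y_i) = 3.5\) and \(E(Y_i^2) = 91/6\), so that

\[ \sigma_Y^2 = \frac{91}{6} - 3.5^2 = \frac{35}{12} \quad \text{and} \quad \text{Var}(\overline{Y}) = \frac{\sigma_Y^2}{n} = \frac{35}{24} \approx 1.458. \]

This agrees with the variance of the sum computed from the enumerated distribution: since \(\overline{Y} = S/2\),

\[ \text{Var}(\overline{Y}) = \frac{\text{Var}(S)}{4} = \frac{35/6}{4} = \frac{35}{24}. \]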

It is worthwhile to mention that these results hold irrespective of the underlying distribution of the \(Y_i\).
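To illustrate that the formulas for the mean and the variance of \(\overline{Y}\) do not rely on normality, the following sketch uses a clearly non-normal population. The exponential distribution with rate \(1\) (mean \(1\), variance \(1\)), the sample size \(n = 25\), the seed and the object names are assumptions chosen for illustration only.

```r
# illustrative settings
set.seed(123)
n <- 25
reps <- 20000

# each column is one sample of size n from the exponential distribution with rate 1
samples <- replicate(reps, rexp(n, rate = 1))

# one sample average per column
avgs <- colMeans(samples)

# theory: E(Y-bar) = mu_Y = 1
mean(avgs)

# theory: Var(Y-bar) = sigma_Y^2 / n = 1 / 25 = 0.04
var(avgs)
```

Up to simulation error, both numbers should be close to the theoretical values even though the population is skewed.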

The Sampling Distribution of \(\overline{Y}\) when \(Y\) Is Normally Distributed

If the \(Y_1,\dots,Y_n\) are i.i.d. draws from a normal distribution with mean \(\mu_Y\) and variance \(\sigma_Y^2\), the following holds for their sample average \(\overline{Y}\):

\[ \overline{Y} \sim \mathcal{N}(\mu_Y, \sigma_Y^2/n) \tag{2.4} \]

For example, if a sample \(Y_i\) with \(i=1,\dots,10\) is drawn from a standard normal distribution with mean \(\mu_Y = 0\) and variance \(\sigma_Y^2=1\) we have

\[ \overline{Y} \sim \mathcal{N}(0,0.1).\]
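A small sketch of how this result can be used in R. Note that \(0.1\) is the variance, whereas pnorm() expects the standard deviation, here \(\sqrt{0.1}\). The threshold \(0.5\) and the names `sd.avg`, `p.within` and `p.single` are illustrative assumptions.

```r
# sample size from the example above
n <- 10

# standard deviation of the sample average: sqrt(0.1), not 0.1
sd.avg <- 1 / sqrt(n)

# probability that the sample average lies within 0.5 of its mean
p.within <- pnorm(0.5, mean = 0, sd = sd.avg) - pnorm(-0.5, mean = 0, sd = sd.avg)
p.within

# for comparison: the same probability for a single N(0, 1) observation
p.single <- pnorm(0.5) - pnorm(-0.5)
p.single
```

The average of ten observations falls into the interval \([-0.5, 0.5]\) far more often than a single observation does, which reflects the smaller variance \(\sigma_Y^2/n\).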

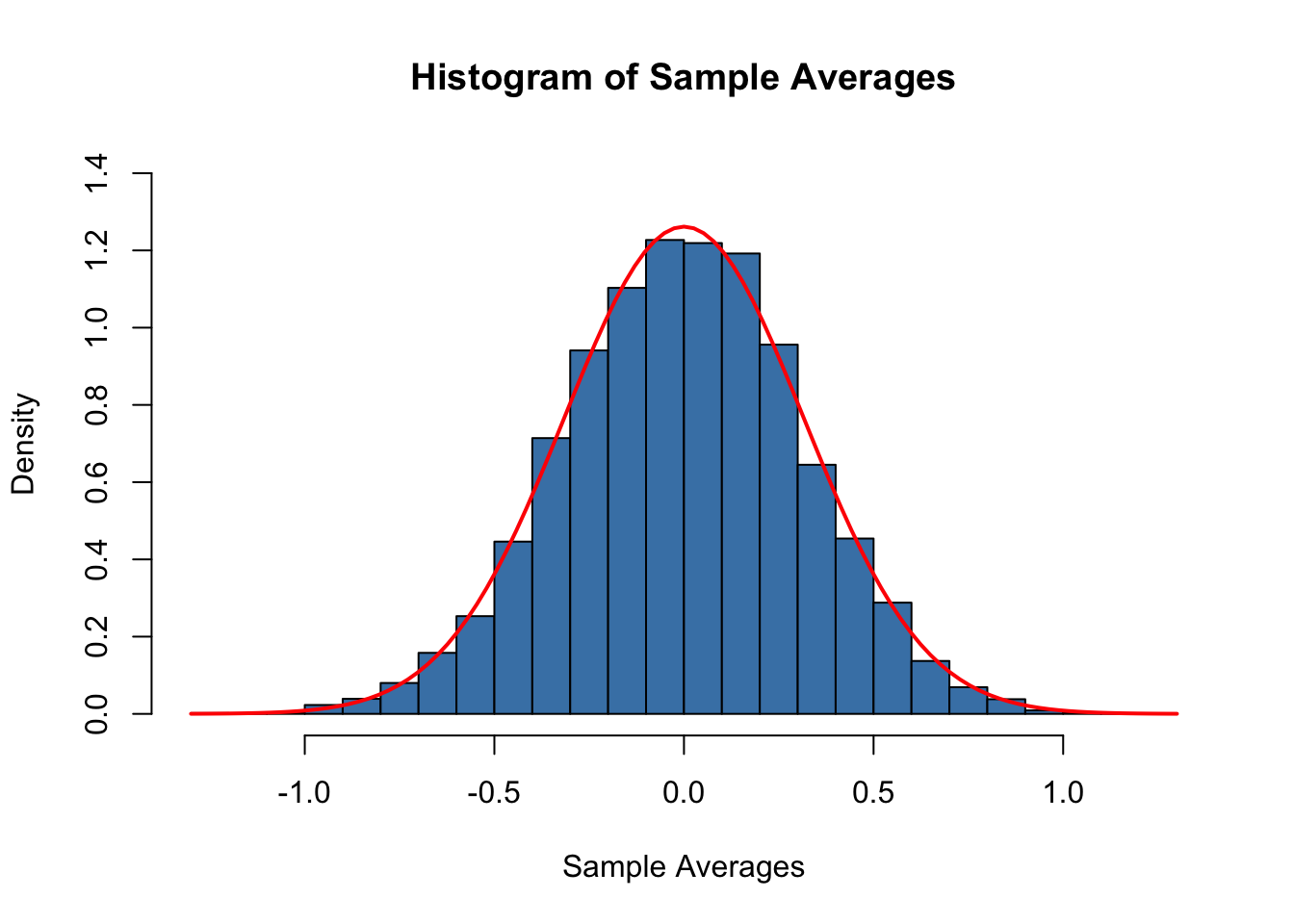

We can use R's random number generation facilities to verify this result. The basic idea is to simulate outcomes of the true distribution of \(\overline{Y}\) by repeatedly drawing random samples of 10 observations from the \(\mathcal{N}(0,1)\) distribution and computing their respective averages. If we do this for a large number of repetitions, the simulated data set of averages should quite accurately reflect the theoretical distribution of \(\overline{Y}\) if the theoretical result holds.

The approach sketched above is an example of what is commonly known as Monte Carlo Simulation or Monte Carlo Experiment. To perform this simulation in R, we proceed as follows:

- Choose a sample size n and the number of samples to be drawn, reps.

- Use the function replicate() in conjunction with rnorm() to draw n observations from the standard normal distribution reps times.

  Note: the outcome of replicate() is a matrix with dimensions n \(\times\) reps. It contains the drawn samples as columns.

- Compute sample means using colMeans(). This function computes the mean of each column, i.e., of each sample and returns a vector.

These steps translate into the following code:

    # set sample size and number of samples
    n <- 10
    reps <- 10000

    # perform random sampling
    samples <- replicate(reps, rnorm(n)) # 10 x 10000 sample matrix

    # compute sample means
    sample.avgs <- colMeans(samples)

We then end up with a vector of sample averages. You can check the vector property of sample.avgs:

    # check that 'sample.avgs' is a vector
    is.vector(sample.avgs)
    #> [1] TRUE

    # print the first few entries to the console
    head(sample.avgs)
    #> [1] -0.1045919  0.2264301  0.5308715 -0.2243476  0.2186909  0.2564663

A straightforward approach to examine the distribution of univariate numerical data is to plot it as a histogram and compare it to some known or assumed distribution. By default, hist() will give us a frequency histogram, i.e., a bar chart where observations are grouped into ranges, also called bins. The ordinate reports the number of observations falling into each of the bins. Instead, we want it to report density estimates for comparison purposes. This is achieved by setting the argument freq = FALSE. The number of bins is adjusted by the argument breaks.

Using curve(), we overlay the histogram with a red line, the theoretical density of a \(\mathcal{N}(0, 0.1)\) random variable. Remember to use the argument add = TRUE to add the curve to the current plot. Otherwise R will open a new graphic device and discard the previous plot!

    # plot the density histogram
    hist(sample.avgs,
         ylim = c(0, 1.4),
         col = "steelblue",
         freq = FALSE,
         breaks = 20)

    # overlay the theoretical distribution of sample averages on top of the histogram
    curve(dnorm(x, sd = 1 / sqrt(n)),
          col = "red",
          lwd = 2,
          add = TRUE)

The sampling distribution of \(\overline{Y}\) is indeed very close to that of a \(\mathcal{N}(0, 0.1)\) distribution so the Monte Carlo simulation supports the theoretical claim.
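The visual comparison can be complemented by a few numbers. The sketch below repeats the simulation in a self-contained way (with an illustrative seed) and compares the simulated mean, variance and some quantiles of the sample averages with their theoretical counterparts under \(\mathcal{N}(0, 0.1)\). The chosen quantile levels in `probs` are an assumption made for illustration.

```r
# illustrative seed; sample size and repetitions as in the text
set.seed(1)
n <- 10
reps <- 10000

# simulate reps sample averages of n standard normal observations
sample.avgs <- colMeans(replicate(reps, rnorm(n)))

# theory: mean 0 and variance 1 / n = 0.1
mean(sample.avgs)
var(sample.avgs)

# simulated and theoretical quantiles side by side
probs <- c(0.05, 0.25, 0.5, 0.75, 0.95)
rbind(simulated = quantile(sample.avgs, probs),
      theoretical = qnorm(probs, sd = 1 / sqrt(n)))
```

Small differences between the two rows are expected and are due to simulation error only.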

Let us discuss another example where using simple random sampling in a simulation setup helps to verify a well known result. As discussed before, the Chi-squared distribution with \(M\) degrees of freedom arises as the distribution of the sum of \(M\) independent squared standard normal distributed random variables.

To visualize the claim stated in equation (2.3), we proceed similarly as in the example before:

- Choose the degrees of freedom, DF, and the number of samples to be drawn, reps.

- Draw reps random samples of size DF from the standard normal distribution using replicate().

- For each sample, square the outcomes and sum them up column-wise. Store the results.

Again, we produce a density estimate for the distribution underlying our simulated data using a density histogram and overlay it with a line graph of the theoretical density function of the \(\chi^2_3\) distribution.

    # number of repetitions
    reps <- 10000

    # set degrees of freedom of a chi-squared distribution
    DF <- 3

    # sample 10000 column vectors of 3 N(0,1) random variables each
    Z <- replicate(reps, rnorm(DF))

    # column sums of squares
    X <- colSums(Z^2)

    # histogram of column sums of squares
    hist(X,
         freq = FALSE,
         col = "steelblue",
         breaks = 40,
         ylab = "Density",
         main = "")

    # add theoretical density
    curve(dchisq(x, df = DF),
          type = "l",
          lwd = 2,
          col = "red",
          add = TRUE)
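A numerical complement to the plot, written as a self-contained sketch with an illustrative seed. It uses two standard facts about the \(\chi^2_M\) distribution that are not stated in the text above, namely mean \(M\) and variance \(2M\), and checks how many simulated values fall below the theoretical 95% quantile.

```r
# illustrative seed; settings as in the text
set.seed(1)
reps <- 10000
DF <- 3

# sums of DF squared standard normal draws, one sum per column
X <- colSums(replicate(reps, rnorm(DF))^2)

# theory for a chi-squared distribution with DF degrees of freedom:
# mean DF = 3 and variance 2 * DF = 6
mean(X)
var(X)

# share of simulated values below the theoretical 95% quantile (should be near 0.95)
share.below <- mean(X <= qchisq(0.95, df = DF))
share.below
```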

Large Sample Approximations to Sampling Distributions

Sampling distributions as considered in the last section play an important role in the development of econometric methods. There are two main approaches in characterizing sampling distributions: an "exact" approach and an "approximate" approach.

The exact approach aims to find a general formula for the sampling distribution that holds for any sample size \(n\). We call this the exact distribution or finite-sample distribution. In the previous examples of dice rolling and normal variates, we have dealt with functions of random variables whose sample distributions are exactly known in the sense that we can write them down as analytic expressions. However, this is not always possible. For \(\overline{Y}\), result (2.4) tells us that normality of the \(Y_i\) implies normality of \(\overline{Y}\) (we demonstrated this for the special case of \(Y_i \overset{i.i.d.}{\sim} \mathcal{N}(0,1)\) with \(n=10\) using a simulation study that involves simple random sampling). Unfortunately, the exact distribution of \(\overline{Y}\) is generally unknown and often hard to derive (or even intractable) if we drop the assumption that the \(Y_i\) have a normal distribution.

Therefore, as can be guessed from its name, the "approximate" approach aims to find an approximation to the sampling distribution where it is required that the sample size \(n\) is large. A distribution that is used as a large-sample approximation to the sampling distribution is also called the asymptotic distribution. This is due to the fact that the asymptotic distribution is the sampling distribution for \(n \rightarrow \infty\), i.e., the approximation becomes exact if the sample size goes to infinity. However, the difference between the sampling distribution and the asymptotic distribution is often negligible for moderate or even small sample sizes so that approximations using the asymptotic distribution are useful.

In this section we will discuss two well known results that are used to approximate sampling distributions and thus constitute key tools in econometric theory: the law of large numbers and the central limit theorem. The law of large numbers states that in large samples, \(\overline{Y}\) is close to \(\mu_Y\) with high probability. The central limit theorem says that the sampling distribution of the standardized sample average, that is, \((\overline{Y} - \mu_Y)/\sigma_{\overline{Y}}\), is asymptotically normally distributed. It is particularly interesting that both results do not depend on the distribution of \(Y\). In other words, even though we are unable to describe the complicated sampling distribution of \(\overline{Y}\) if \(Y\) is not normal, approximations of the latter using the central limit theorem simplify the development and applicability of econometric procedures enormously. This is a key component underlying the theory of statistical inference for regression models. Both results are summarized in Key Concept 2.6 and Key Concept 2.7.

Key Concept 2.6

Convergence in Probability, Consistency and the Law of Large Numbers

The sample average \(\overline{Y}\) converges in probability to \(\mu_Y\): \(\overline{Y}\) is consistent for \(\mu_Y\) if the probability that \(\overline{Y}\) is in the range \((\mu_Y - \epsilon)\) to \((\mu_Y + \epsilon)\) becomes arbitrarily close to \(1\) as \(n\) increases for any constant \(\epsilon > 0\). We write this as

\[ P(\mu_Y-\epsilon \leq \overline{Y} \leq \mu_Y + \epsilon) \rightarrow 1, \, \epsilon > 0 \text{ as } n\rightarrow\infty. \]

Consider the independently and identically distributed random variables \(Y_i, i=1,\dots,n\) with expectation \(E(Y_i)=\mu_Y\) and variance \(\text{Var}(Y_i)=\sigma^2_Y\). Under the condition that \(\sigma^2_Y< \infty\), that is, large outliers are unlikely, the law of large numbers thus states that

\[ \overline{Y} \xrightarrow[]{p} \mu_Y. \]
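One way to see why a finite variance is sufficient here is Chebyshev's inequality, a standard result that is not part of the text above. Combined with \(E(\overline{Y}) = \mu_Y\) and \(\text{Var}(\overline{Y}) = \sigma_Y^2/n\) it gives, for any \(\epsilon > 0\),

\[ P\left(|\overline{Y} - \mu_Y| > \epsilon\right) \leq \frac{\text{Var}(\overline{Y})}{\epsilon^2} = \frac{\sigma_Y^2}{n \epsilon^2} \rightarrow 0 \text{ as } n\rightarrow\infty, \]

so that

\[ P(\mu_Y-\epsilon \leq \overline{Y} \leq \mu_Y + \epsilon) \geq 1 - \frac{\sigma_Y^2}{n \epsilon^2} \rightarrow 1 \text{ as } n\rightarrow\infty. \]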

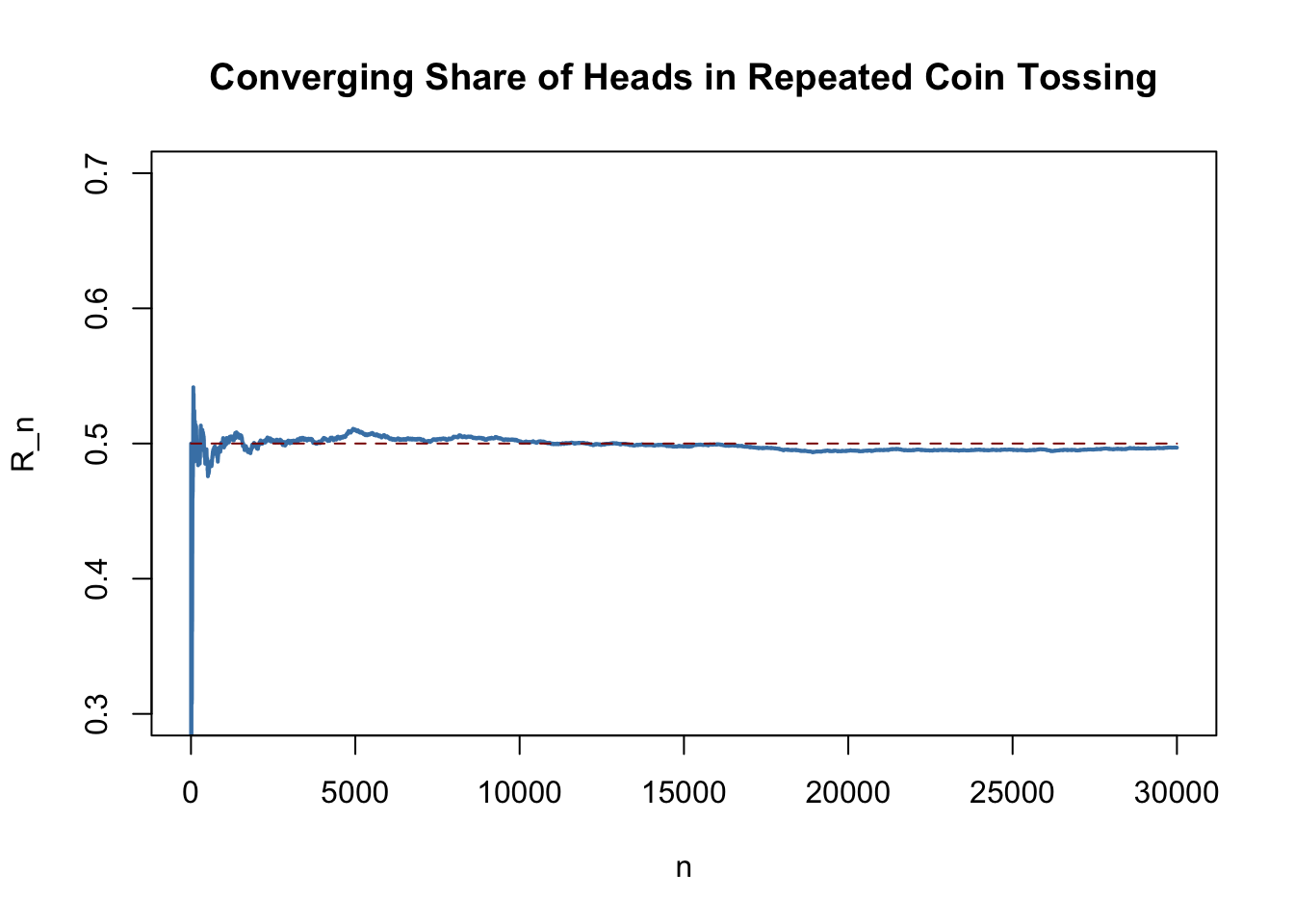

The following application simulates a large number of coin tosses (you may set the number of trials using the slider) with a fair coin and computes the fraction of heads observed for each additional toss. The result is a random path that, as stated by the law of large numbers, shows a tendency to approach the value of \(0.5\) as \(n\) grows.

The core statement of the law of large numbers is that under quite general conditions, the probability of obtaining a sample average \(\overline{Y}\) that is close to \(\mu_Y\) is high if we have a large sample size.
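The statement can be made tangible by estimating the probability \(P(\mu_Y-\epsilon \leq \overline{Y} \leq \mu_Y + \epsilon)\) for several sample sizes. The sketch below is an illustration under assumptions that are not part of the text: draws from the uniform distribution on \([0, 1]\) (so \(\mu_Y = 0.5\)), \(\epsilon = 0.05\), and the chosen seed, sample sizes and number of repetitions.

```r
# illustrative settings
set.seed(1)
eps <- 0.05
reps <- 2000
sample.sizes <- c(10, 100, 1000)

# for each sample size, estimate the probability that the sample average
# lies within eps of the population mean 0.5
prob.close <- sapply(sample.sizes, function(n) {
  samples <- matrix(runif(n * reps), nrow = n)  # one sample per column
  avgs <- colMeans(samples)
  mean(abs(avgs - 0.5) <= eps)
})
names(prob.close) <- sample.sizes
prob.close
```

The estimated probabilities increase with \(n\) and approach \(1\), in line with the law of large numbers.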

Consider the example of repeatedly tossing a coin where \(Y_i\) is the result of the \(i^{th}\) coin toss. \(Y_i\) is a Bernoulli distributed random variable with \(p\) the probability of observing head \[ P(Y_i) = \begin{cases} p, & Y_i = 1 \\ 1-p, & Y_i = 0 \end{cases} \] where \(p = 0.5\) as we assume a fair coin. It is straightforward to show that

\[ \mu_Y = p = 0.5. \] Let \(R_n\) denote the proportion of heads in the first \(n\) tosses,

\[ R_n = \frac{1}{n} \sum_{i=1}^n Y_i. \tag{2.5}\]

According to the law of large numbers, the observed proportion of heads converges in probability to \(\mu_Y = 0.5\), the probability of tossing head in a single coin toss, \[ R_n \xrightarrow[]{p} \mu_Y=0.5 \ \ \text{as} \ \ n \rightarrow \infty.\] This result is illustrated by the interactive application in Key Concept 2.6. We now show how to replicate this using R.

The procedure is as follows:

- Sample N observations from the Bernoulli distribution, e.g., using sample().

- Calculate the proportion of heads \(R_n\) as in (2.5). A way to achieve this is to call cumsum() on the vector of observations Y to obtain its cumulative sum and then divide by the respective number of observations.

We continue by plotting the path and also add a dashed line for the benchmark probability \(p = 0.5\).

    # set seed
    set.seed(1)

    # set number of coin tosses and simulate
    N <- 30000
    Y <- sample(0:1, N, replace = TRUE)

    # calculate R_n for 1:N
    S <- cumsum(Y)
    R <- S / (1:N)

    # plot the path
    plot(R,
         ylim = c(0.3, 0.7),
         type = "l",
         col = "steelblue",
         lwd = 2,
         xlab = "n",
         ylab = "R_n",
         main = "Converging Share of Heads in Repeated Coin Tossing")

    # add a dashed line for R_n = 0.5
    lines(c(0, N),
          c(0.5, 0.5),
          col = "darkred",
          lty = 2,
          lwd = 1)

There are several things to be said about this plot.

- The blue graph shows the observed proportion of heads when tossing a coin \(n\) times.

- Since the \(Y_i\) are random variables, \(R_n\) is a random variate, too. The path depicted is only one of many possible realizations of \(R_n\) as it is determined by the \(30000\) observations sampled from the Bernoulli distribution.

- If the number of coin tosses \(n\) is small, the proportion of heads may be anything but close to its theoretical value, \(\mu_Y = 0.5\). However, as more and more observations are included in the sample we find that the path stabilizes in the neighborhood of \(0.5\). The average of multiple trials shows a clear tendency to converge to its expected value as the sample size increases, just as claimed by the law of large numbers.
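To see that a plotted path is only one of many possible realizations, several independent paths can be simulated at once. The following is a sketch; the number of paths, the seed and the object names are illustrative assumptions.

```r
# illustrative settings: 5 independent sequences of N fair coin tosses
set.seed(2)
N <- 30000
paths <- 5

# N x paths matrix of tosses, one sequence per column
Y <- matrix(sample(0:1, N * paths, replace = TRUE), nrow = N)

# running proportion of heads for every column:
# row i of the cumulative sums is divided by i
R <- apply(Y, 2, cumsum) / (1:N)

# the paths differ noticeably after 10 tosses ...
R[10, ]

# ... but all of them end up close to 0.5 after N tosses
R[N, ]

# plot all paths together with the benchmark probability 0.5
matplot(R, type = "l", lty = 1, ylim = c(0.3, 0.7), xlab = "n", ylab = "R_n")
abline(h = 0.5, lty = 2)
```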

Key Concept 2.7

The Central Limit Theorem

Suppose that \(Y_1,\dots,Y_n\) are independently and identically distributed random variables with expectation \(E(Y_i)=\mu_Y\) and variance \(\text{Var}(Y_i)=\sigma^2_Y\) where \(0<\sigma^2_Y<\infty\).

The Central Limit Theorem (CLT) states that, if the sample size \(n\) goes to infinity, the distribution of the standardized sample average \[ \frac{\overline{Y} - \mu_Y}{\sigma_{\overline{Y}}} = \frac{\overline{Y} - \mu_Y}{\sigma_Y/\sqrt{n}} \ \] becomes arbitrarily well approximated by the standard normal distribution.

The application below demonstrates the CLT for the sample average of normally distributed random variables with mean \(5\) and variance \(25^2\). You may check the following properties:

- The distribution of the sample average is normal.

- As the sample size increases, the distribution of \(\overline{Y}\) tightens around the true mean of \(5\).

- The distribution of the standardized sample average is close to the standard normal distribution for large \(n\).
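The second property can be checked without the interactive application. The sketch below draws samples from a normal distribution with mean \(5\) and standard deviation \(25\) and compares the simulated standard deviation of \(\overline{Y}\) with \(\sigma_Y/\sqrt{n}\). The sample sizes, the number of repetitions, the seed and the object names are illustrative assumptions.

```r
# population parameters from the text: mean 5 and variance 25^2
set.seed(1)
mu <- 5
sigma <- 25

# illustrative simulation settings
reps <- 5000
sample.sizes <- c(10, 100, 1000)

# simulated standard deviation of the sample average for each sample size
sd.avg <- sapply(sample.sizes, function(n) {
  samples <- matrix(rnorm(n * reps, mean = mu, sd = sigma), nrow = n)
  sd(colMeans(samples))
})

# compare with the theoretical value sigma / sqrt(n)
cbind(n = sample.sizes,
      simulated = sd.avg,
      theoretical = sigma / sqrt(sample.sizes))
```

The spread of \(\overline{Y}\) shrinks at the rate \(1/\sqrt{n}\), which is the tightening around the true mean described above.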

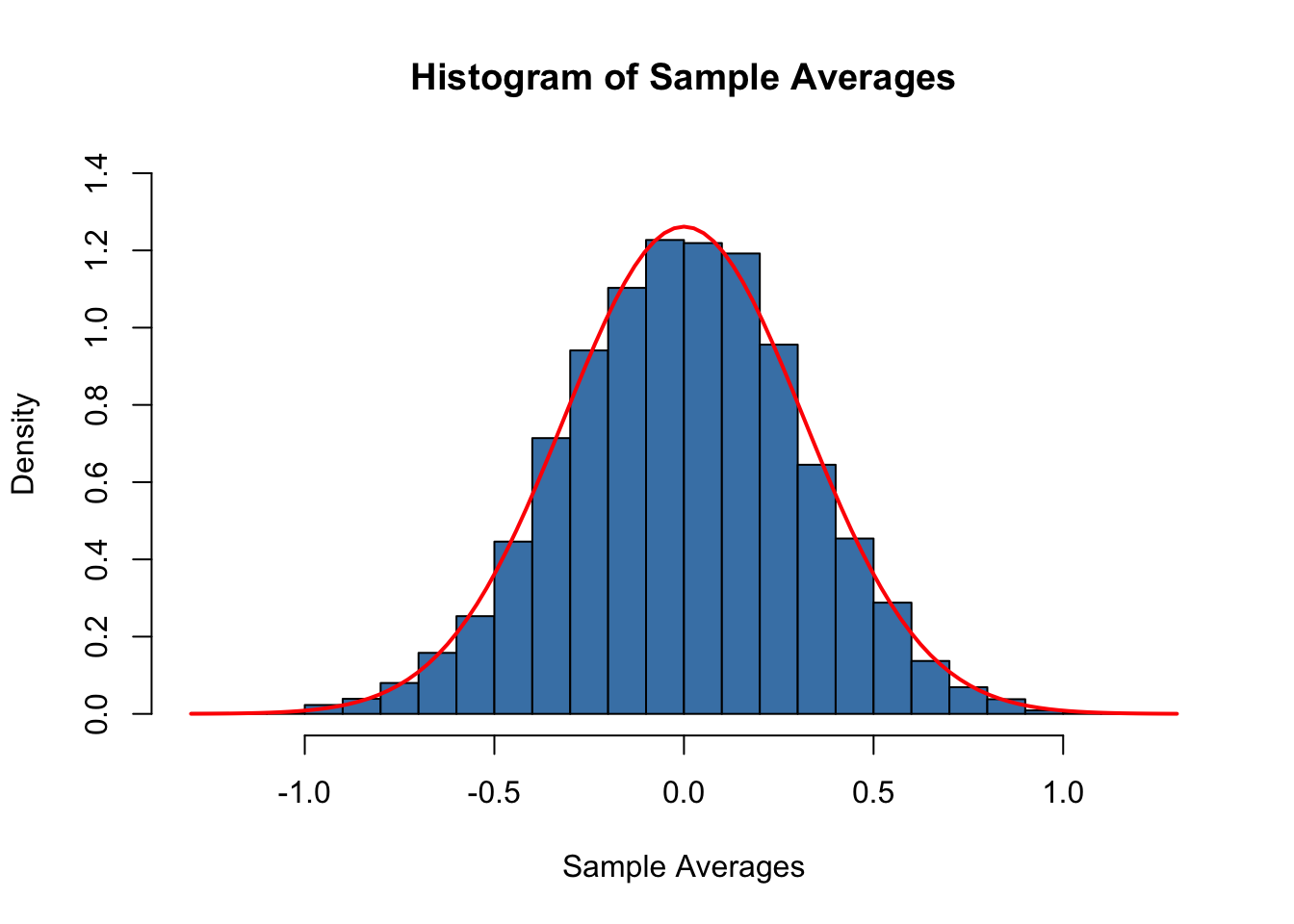

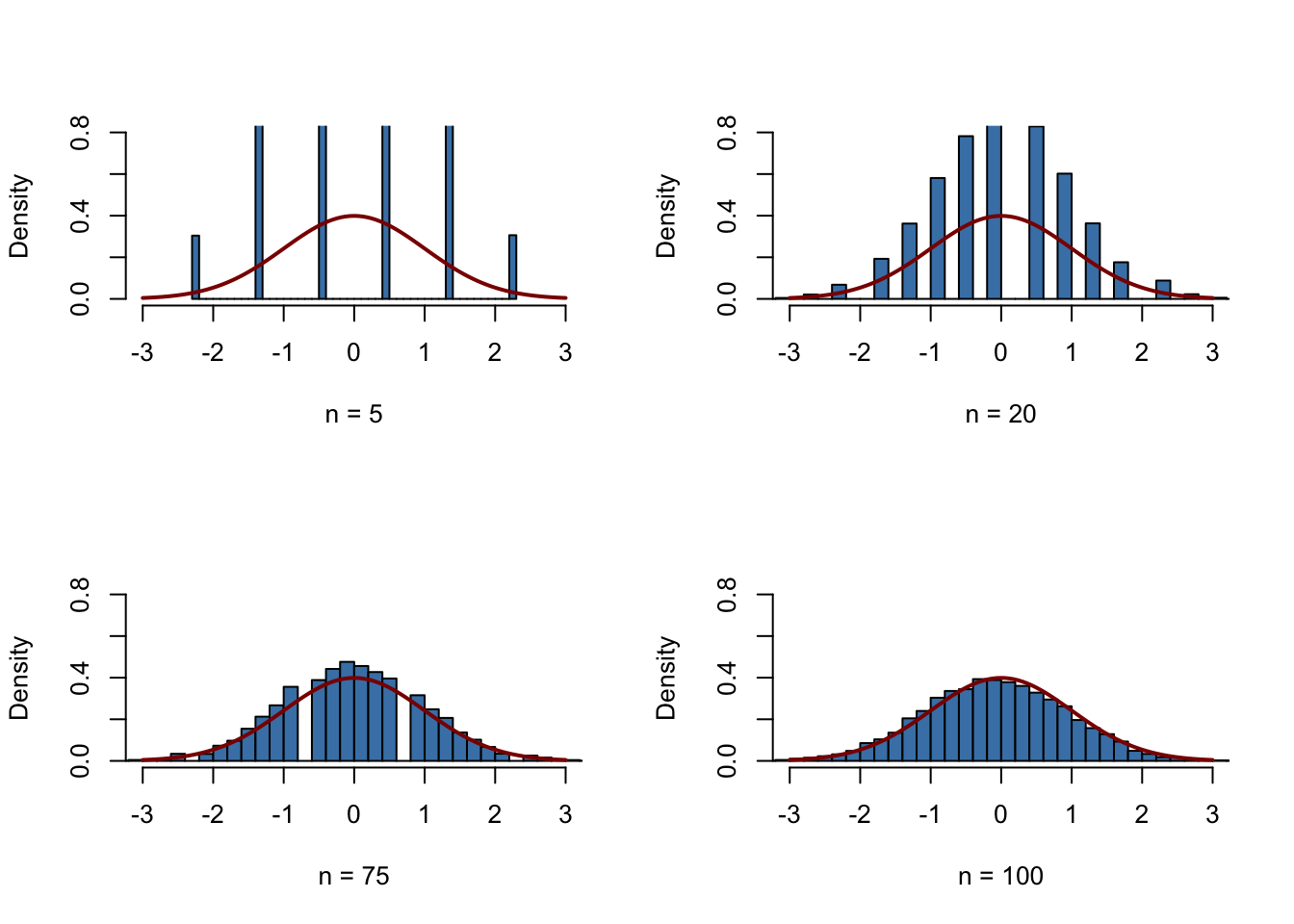

According to the CLT, the distribution of the sample mean \(\overline{Y}\) of the Bernoulli distributed random variables \(Y_i\), \(i=1,...,n\), is well approximated by the normal distribution with parameters \(\mu_Y=p=0.5\) and \(\sigma^2_{\overline{Y}} = p(1-p)/n = 0.25/n\) for large \(n\). Consequently, for the standardized sample mean we conclude that \[ \frac{\overline{Y} - 0.5}{0.5/\sqrt{n}} \tag{2.6}\] should be well approximated by the standard normal distribution \(\mathcal{N}(0,1)\). We use another simulation study to demonstrate this graphically. The idea is as follows.

Draw a large number of random samples, \(10000\) say, of size \(n\) from the Bernoulli distribution and compute the sample averages. Standardize the averages as shown in (2.6). Next, visualize the distribution of the generated standardized sample averages by means of a histogram and compare to the standard normal distribution. Repeat this for different sample sizes \(n\) to see how increasing the sample size \(n\) impacts the simulated distribution of the averages.

In R, realize this as follows:

- We start by defining that the next four subsequently generated figures shall be drawn in a \(2\times2\) array such that they can be easily compared. This is done by calling par(mfrow = c(2, 2)) before generating the figures.

- We define the number of repetitions reps as \(10000\) and create a vector of sample sizes named sample.sizes. We consider samples of sizes \(5\), \(20\), \(75\), and \(100\).

- Next, we combine two for() loops to simulate the data and plot the distributions. The inner loop generates \(10000\) random samples, each consisting of n observations that are drawn from the Bernoulli distribution, and computes the standardized averages. The outer loop executes the inner loop for the different sample sizes n and produces a plot for each iteration.

The complete simulation reads:

    # subdivide the plot panel into a 2-by-2 array
    par(mfrow = c(2, 2))

    # set the number of repetitions and the sample sizes
    reps <- 10000
    sample.sizes <- c(5, 20, 75, 100)

    # set seed for reproducibility
    set.seed(123)

    # outer loop (loop over the sample sizes)
    for (n in sample.sizes) {

      samplemean <- rep(0, reps)    # initialize the vector of sample means
      stdsamplemean <- rep(0, reps) # initialize the vector of standardized sample means

      # inner loop (loop over repetitions)
      for (i in 1:reps) {
        x <- rbinom(n, 1, 0.5)
        samplemean[i] <- mean(x)
        stdsamplemean[i] <- sqrt(n) * (mean(x) - 0.5) / 0.5
      }

      # plot histogram and overlay the N(0,1) density in every iteration
      hist(stdsamplemean,
           col = "steelblue",
           freq = FALSE,
           breaks = 40,
           xlim = c(-3, 3),
           ylim = c(0, 0.8),
           xlab = paste("n =", n),
           main = "")

      curve(dnorm(x),
            lwd = 2,
            col = "darkred",
            add = TRUE)
    }

We see that the simulated sampling distribution of the standardized average tends to deviate strongly from the standard normal distribution if the sample size is small, e.g., for \(n=5\) and \(n=20\). However, as \(n\) grows, the histograms approach the standard normal distribution. The approximation works quite well, see \(n=100\).
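The quality of the approximation can also be quantified without simulation, because the number of heads in \(n\) tosses of a fair coin follows a binomial distribution. The sketch below computes, for each sample size, the largest absolute difference between the exact distribution function of the standardized average (2.6) and the standard normal distribution function. Using this maximum distance as a yardstick is an assumption made for illustration; it is not part of the original analysis, and the object names are hypothetical.

```r
# sample sizes from the simulation above
sample.sizes <- c(5, 20, 75, 100)

# largest distance between the exact CDF of the standardized average
# and the standard normal CDF, for each sample size
max.dist <- sapply(sample.sizes, function(n) {
  k <- 0:n                               # possible numbers of heads
  z <- (k / n - 0.5) / (0.5 / sqrt(n))   # standardized sample averages as in (2.6)
  F.right <- pbinom(k, n, 0.5)           # exact CDF at each jump point
  F.left <- pbinom(k - 1, n, 0.5)        # exact CDF just below each jump point
  max(abs(F.right - pnorm(z)), abs(F.left - pnorm(z)))
})
names(max.dist) <- sample.sizes
round(max.dist, 3)
```

The distance shrinks as \(n\) grows, which matches the visual impression from the histograms. It does not vanish quickly, because the standardized average of Bernoulli variables is discrete while the normal distribution is continuous.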

Source: https://www.econometrics-with-r.org/2-2-RSATDOSA.html

Posted by: haackthipper85.blogspot.com
